Top 5 Data Cleaning Tools Compared

Here we discuss some of the top data cleaning tools you can use to streamline your data management process.

Data cleanliness ensures that the information we’re using to drive decision making and deepen our understanding is, well, trustworthy. Data rarely (if ever) comes to a business in a neat package. It’s usually pulled together from a myriad of sources and exists in a variety of formats.

Cleaning data is a necessary task, but trying to do it manually is (a) cumbersome and (b) unscalable, which makes investing in the right data cleaning tools all the more important. Below, we give a rundown of five options.

OpenRefine (formerly Google Refine) is an open-source data cleanup tool that handles very large datasets. Using clustering algorithms, OpenRefine spots data inconsistencies, outliers, and errors, removing much of the manual work involved in reviewing data entries.
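To make the clustering idea concrete, here is a minimal Python sketch of key-collision clustering, loosely modeled on the fingerprint approach described in OpenRefine's documentation. It is an illustration, not OpenRefine's actual code: the function names and sample values are made up for this example.

```python
import string
import unicodedata
from collections import defaultdict


def fingerprint(value: str) -> str:
    """Build a normalized key so that near-identical spellings collide."""
    # Fold accented characters to their closest ASCII form.
    ascii_text = (
        unicodedata.normalize("NFKD", value)
        .encode("ascii", "ignore")
        .decode("ascii")
    )
    # Lowercase and strip ASCII punctuation.
    cleaned = ascii_text.lower().translate(
        str.maketrans("", "", string.punctuation)
    )
    # Split on whitespace, drop repeated tokens, and sort what is left.
    tokens = sorted(set(cleaned.split()))
    return " ".join(tokens)


def cluster(values: list[str]) -> list[list[str]]:
    """Group values that share a fingerprint; keep groups with 2+ spellings."""
    groups: dict[str, list[str]] = defaultdict(list)
    for value in values:
        key = fingerprint(value)
        if value not in groups[key]:
            groups[key].append(value)
    return [members for members in groups.values() if len(members) > 1]


# Hypothetical sample data for illustration only.
names = ["Acme Inc.", "acme inc", "Inc. Acme", "Globex", "Café Río", "cafe rio"]
clusters = cluster(names)
for members in clusters:
    print(members)
```

Each printed group is a set of entries a reviewer would probably want to merge into one canonical spelling, which is the kind of manual review that clustering is meant to shorten.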

Although OpenRefine’s interface presents data in familiar rows and columns, much like a spreadsheet or a database table, new users may face a moderate learning curve, depending on their technical skills. However, there are lots of free tutorials on university websites, data organizations’ blogs, and YouTube – plus OpenRefine’s own user manual, of course.

OpenRefine runs locally, making it ideal for companies that require data to be kept and processed on premises.

Alteryx Designer Cloud (formerly Trifacta Wrangler) is a data cleaning tool with plenty of connectors, allowing you to extract records from multiple sources. This lets you centralize your data, making it easier to find inconsistencies and duplicates even when the data is gathered at different touchpoints. Once you have clean data, you can send it downstream to your data lake or to a business intelligence tool like Tableau using Alteryx’s connectors.

Teams with large datasets of both structured and unstructured data can use Alteryx to speed up data cleaning. Alteryx’s data cleanup tool is ideal for retail applications. It can be integrated with Alteryx’s geospatial analytics tool to enrich analysis of retail sales, customer footprint, and ad area distribution.

WinPure Clean & Match is a beginner-friendly data cleanup tool that requires no special training. It uses standard and proprietary algorithms to match data and suggest items for deduplication. The tool automatically generates visual data-matching reports, such as duplicates due to spelling errors, acronyms, and different input formats.
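As a rough illustration of how duplicates caused by spelling errors can be suggested, here is a short Python sketch that uses the standard library's `difflib.SequenceMatcher`. This is a swapped-in technique, not WinPure's proprietary matching: the 0.85 threshold, the function names, and the sample records are all assumptions made for this example.

```python
from difflib import SequenceMatcher
from itertools import combinations


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio, ignoring case and surrounding spaces."""
    return SequenceMatcher(None, a.strip().lower(), b.strip().lower()).ratio()


def suggest_duplicates(
    records: list[str], threshold: float = 0.85
) -> list[tuple[str, str, float]]:
    """Compare every pair of records and return the pairs scoring at or above the threshold."""
    suggestions = []
    for left, right in combinations(records, 2):
        score = similarity(left, right)
        if score >= threshold:
            suggestions.append((left, right, round(score, 2)))
    return suggestions


# Hypothetical customer names for illustration only.
customers = ["Jonathan Smith", "Jonathon Smith", "Maria Garcia", "Peter Wong"]
for left, right, score in suggest_duplicates(customers):
    print(f"Possible duplicate: {left!r} ~ {right!r} (score {score})")
```

Comparing every pair is fine for a small list but grows quadratically, so a sketch like this would need blocking or indexing before it could handle a large dataset.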

WinPure runs on your own computer, so it’s ideal for companies that need on-premises processing, whether because their datasets are small or because they face tight regulations.

IBM InfoSphere QualityStage is part of a broader data integration platform by IBM. It’s designed for big data applications, particularly for business intelligence purposes. It speeds up cleaning by running QA rules during data ingestion and transformation and before loading into the data warehouse. It also has more than 250 built-in data classes like credit card and taxpayer ID.
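To show what a data class check and an ingestion-time QA rule might look like in principle, here is a small Python sketch. It is not IBM's implementation: the `credit_card` class name, the `card` column, and the rule itself are hypothetical. The checksum used is the standard Luhn algorithm, which many payment card numbers satisfy.

```python
import re

# Assumption: card values contain only digits, spaces, and hyphens.
CARD_PATTERN = re.compile(r"[0-9 -]+")


def luhn_valid(number: str) -> bool:
    """Check a digit string against the Luhn checksum."""
    digits = [int(ch) for ch in number if ch in "0123456789"]
    if not 13 <= len(digits) <= 19:
        return False
    total = 0
    # Walk right to left, doubling every second digit.
    for index, digit in enumerate(reversed(digits)):
        if index % 2 == 1:
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def classify(value: str) -> str:
    """Assign a hypothetical data class to a single value."""
    if CARD_PATTERN.fullmatch(value) and luhn_valid(value):
        return "credit_card"
    return "unclassified"


def ingest(rows: list[dict]) -> tuple[list[dict], list[dict]]:
    """Apply one QA rule at ingestion: the 'card' column must classify as credit_card."""
    accepted, rejected = [], []
    for row in rows:
        if classify(str(row.get("card", ""))) == "credit_card":
            accepted.append(row)
        else:
            rejected.append(row)
    return accepted, rejected


# Hypothetical rows; 4111 1111 1111 1111 is a widely used test number.
rows = [
    {"id": 1, "card": "4111 1111 1111 1111"},
    {"id": 2, "card": "4111 1111 1111 1112"},
    {"id": 3, "card": "not a card"},
]
accepted, rejected = ingest(rows)
print("accepted:", [row["id"] for row in accepted])
print("rejected:", [row["id"] for row in rejected])
```

Running the rule before loading means rejected rows can be routed to a review queue instead of landing in the warehouse.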

You can run IBM InfoSphere QualityStage either on-premises or on a public or private cloud.

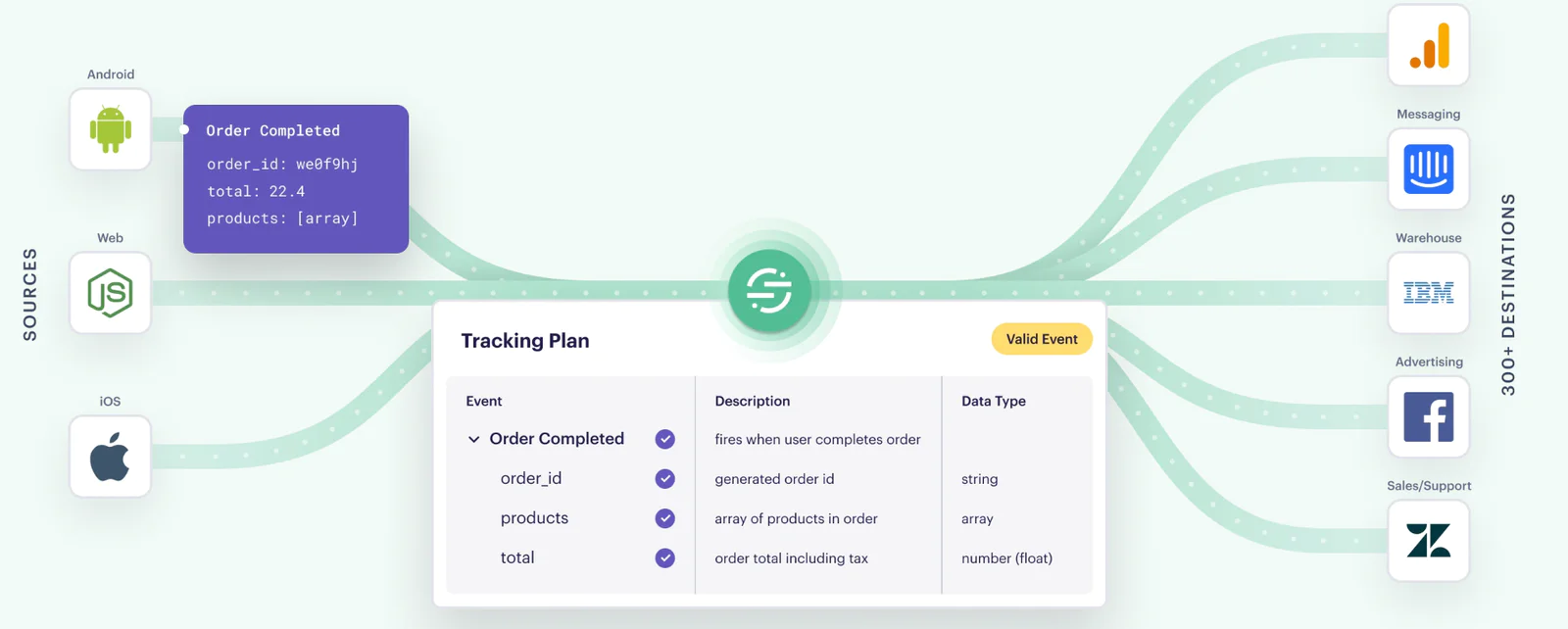

Segment Protocols helps automate data governance at scale. It checks that each event adheres to the company’s overarching tracking plan, which lets it proactively block and flag bad data before it reaches downstream tools. It can also automatically transform data so that it matches the company’s standardized naming conventions (e.g., OrderCompleted, not order_completed).
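The idea of checking events against a tracking plan can be sketched in a few lines of Python. This is a conceptual illustration only, not Segment's API or the way Protocols is implemented: the plan structure, the function names, and the event shape are all hypothetical.

```python
import re

# Hypothetical tracking plan: event name -> required property names.
TRACKING_PLAN = {
    "OrderCompleted": {"order_id", "total"},
    "ProductViewed": {"product_id"},
}


def to_pascal_case(name: str) -> str:
    """Convert names like 'order_completed' to 'OrderCompleted'."""
    parts = re.split(r"[_\s-]+", name.strip())
    return "".join(part[:1].upper() + part[1:] for part in parts if part)


def validate_event(event: dict) -> tuple[str, dict, list[str]]:
    """Normalize the event name, then allow or block the event based on the plan."""
    name = to_pascal_case(event.get("event", ""))
    properties = event.get("properties", {})
    normalized = {**event, "event": name}
    if name not in TRACKING_PLAN:
        return "blocked", normalized, [f"unplanned event: {name}"]
    missing = sorted(TRACKING_PLAN[name] - set(properties))
    if missing:
        return "blocked", normalized, [f"missing property: {p}" for p in missing]
    return "allowed", normalized, []


# Hypothetical incoming events.
events = [
    {"event": "order_completed", "properties": {"order_id": "A1", "total": 20}},
    {"event": "order_completed", "properties": {"order_id": "A2"}},
    {"event": "cart_shared", "properties": {}},
]
for event in events:
    status, normalized, violations = validate_event(event)
    print(status, normalized["event"], violations)
```

The first event is renamed and allowed, while the other two are blocked with a reason attached, which mirrors the block, flag, and transform behavior described above.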

Twilio Segment comes with over 450 pre-built integrations, along with the ability to set up custom Sources and Destinations. Segment also offers key protections like data masking, role-based authorization, and regional data processing to remain GDPR compliant, along with being a HIPAA-eligible platform.

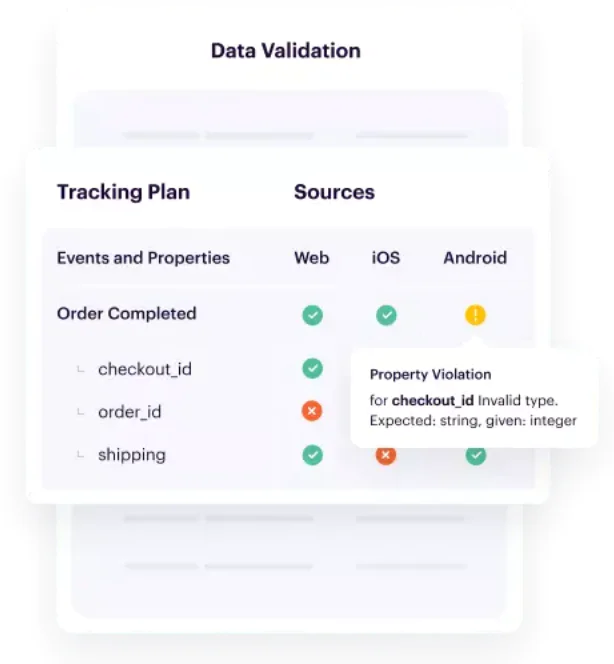

When a company grows, the types of data it collects evolve and increase. It’s often difficult to enforce data standards consistently, especially when different teams are extracting information from many sources. A tool like Protocols helps by automatically enforcing your tracking plan and flagging violations before they pollute your database.

Typeform, a SaaS company that makes software for building online forms and surveys, was growing fast. As a result, it was collecting more data across the organization but wasn’t able to enforce its standards across all collection touchpoints. The result was lots of duplicated and incompatible data – leading to lower-quality insights for the product, marketing, and customer success teams.

Typeform solved this problem by using Segment to centralize its data and enforce its data governance strategy across the company. This helped Typeform:

- Consolidate tracked events from 200 to 50, reducing software costs and freeing up bandwidth.
- Validate data in real time and automatically apply transformations, preventing low-quality data from entering the database.
- Automate marketing workflows based on trusted customer data by connecting Segment to downstream tools.

Learn more about how Protocols can help you enforce data standards across your organization and make decisions based on trusted, high-quality data.


Data cleaning is the process of identifying, transforming, or removing incorrect, incomplete, invalid, and duplicate data within a dataset.
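As a small, tool-agnostic illustration of that definition, the Python sketch below transforms values (trimming and lowercasing), then removes incomplete, invalid, and duplicate records. The field names, the deliberately simple email pattern, and the sample records are assumptions made for this example.

```python
import re

# Deliberately simple pattern: something@something.something, no spaces.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def clean(records: list[dict]) -> list[dict]:
    """Normalize records and drop incomplete, invalid, and duplicate entries."""
    seen = set()
    cleaned = []
    for record in records:
        # Transform: trim whitespace and lowercase the email.
        email = (record.get("email") or "").strip().lower()
        name = (record.get("name") or "").strip()
        if not email or not name:
            continue  # incomplete
        if not EMAIL_PATTERN.match(email):
            continue  # invalid
        if email in seen:
            continue  # duplicate
        seen.add(email)
        cleaned.append({"name": name, "email": email})
    return cleaned


# Hypothetical records for illustration only.
records = [
    {"name": " Ada Lovelace ", "email": "ADA@example.com"},
    {"name": "Ada Lovelace", "email": "ada@example.com "},
    {"name": "Grace Hopper", "email": "grace@example"},
    {"name": "", "email": "x@example.com"},
    {"name": "Alan Turing", "email": "alan@example.com"},
]
for row in clean(records):
    print(row)
```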

Look for a data cleaning tool that lets you build and automate rule-based QA, provides built-in and customizable rules, and detects duplicates and errors in your dataset with high accuracy. To break down data silos and empower users to generate insights and activate data downstream, choose a tool that is accessible to both business and technical users.

Segment Protocols offers a free version for users with up to two data sources, one data warehouse destination, and up to 500,000 reverse ETL records per month.

Segment’s Protocols feature improves your data hygiene by letting you enforce your tracking plan across your organization. Segment uses the tracking plan as a basis for automated, rule-based QA. It flags violations and identifies duplicates across your dataset.
